Umbra: A Visual Analysis Approach for Defense Construction Against Inference Attacks on Sensitive Information

Abstract

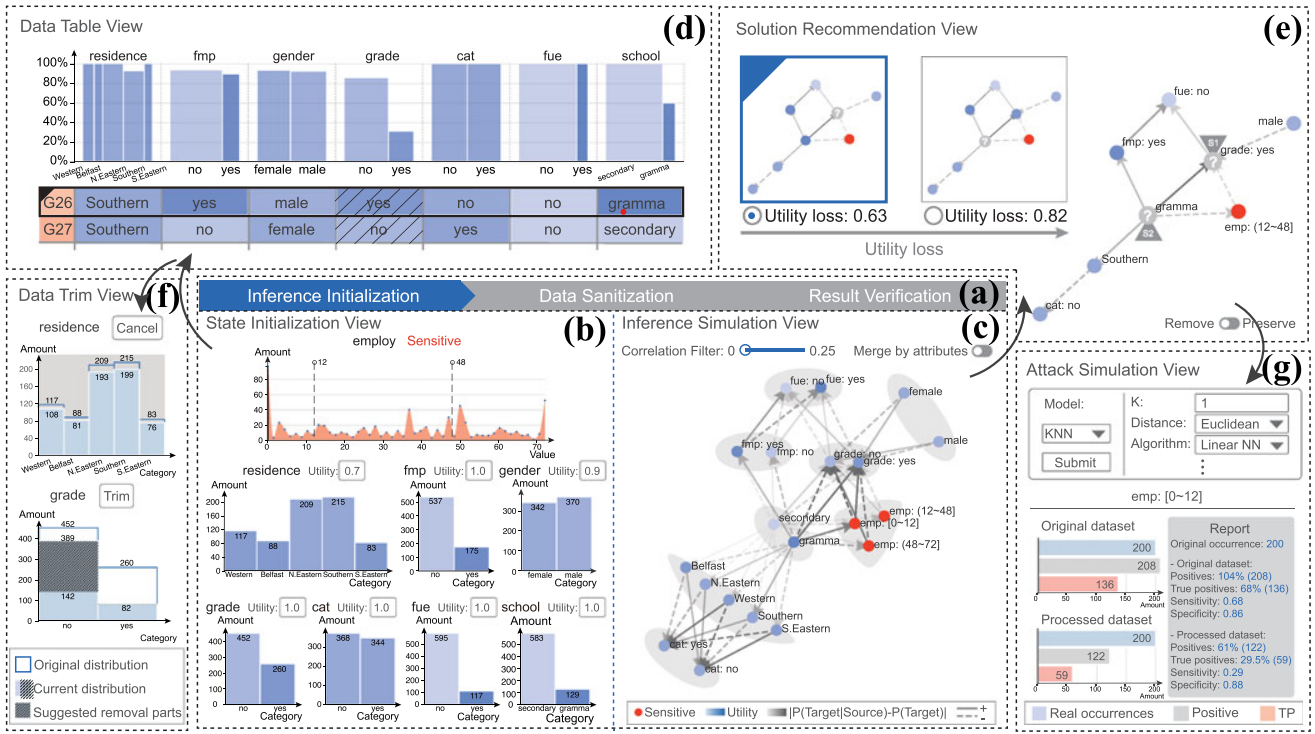

Collecting and analyzing anonymous personal information is a required part of data analysis processes such as medical diagnosis and restaurant recommendation. Such data should be stored so that no specific individual's information can be disclosed. Unfortunately, inference attacks, which combine background knowledge with intelligent models, prevent classic sanitization techniques such as syntactic anonymity and differential privacy from fully protecting sensitive information. As a solution, we introduce a three-stage approach embedded in a visual interface, which depicts the underlying inference behaviors via a Bayesian network and supports customized defenses against inference attacks from unknown adversaries. In particular, our approach visually explains the process details of the underlying privacy-preserving models, allowing users to verify whether the results satisfy their privacy preservation requirements. We demonstrate the effectiveness of our approach through two case studies and expert reviews.